Back in March 2016, now almost a year ago, Cisco announced its move into the hyperconverged space with HyperFlex, a solution built on the software-defined storage technology from SpringPath. I wrote about that announcement at the time here – http://bit.ly/1ngMmb6

Some of the key figures announced today were that over 70PB of storage has been sold as part of this solution and that there are around 950 new customers signed up to the HyperFlex offerings.

As I covered in my launch post on HyperFlex, the differentiators are the integrated network fabric, which makes it a one-stop shop for compute, storage and networking, and the ability to integrate with existing Cisco data center tools.

What’s new in HyperFlex 2.0

I would suggest that a lot of the new features are just table stakes. Non-disruptive rolling upgrades, for example, have to be a given for any hyperconverged offering in this day and age, along with simplicity of configuration and deployment.

The next key new feature is the All Flash option for HyperFlex. Again, the paragraph above applies to this one, but it is still worth pointing out.

All Flash was, I think, just a matter of time. The world is absolutely moving this way and the hyperconverged space is no different.

Generally speaking, when we talk about All Flash we expect high performance: high IOPS and consistently high throughput while keeping latency low. What we don’t want is to have to compromise on storage efficiency. With 2.0 and the All Flash release you do not lose the always-on inline deduplication and compression.
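To make "inline deduplication and compression" a little more concrete, here is a minimal Python sketch of the general idea: fingerprint each block on the write path, store a block only if it has not been seen before, and compress what does get stored. This is a toy illustration only. The class name, the use of SHA-256 and zlib, and the block size are my assumptions and say nothing about how HyperFlex implements it.

```python
import hashlib
import zlib


class InlineDedupStore:
    """Toy block store (hypothetical): dedup, then compress, on the write path."""

    def __init__(self):
        self.blocks = {}        # fingerprint -> compressed block
        self.logical_bytes = 0  # bytes the clients think they wrote

    def write(self, data: bytes) -> str:
        """Store a block and return its fingerprint."""
        self.logical_bytes += len(data)
        fingerprint = hashlib.sha256(data).hexdigest()
        # Deduplication: identical content is only ever stored once.
        if fingerprint not in self.blocks:
            # Compression happens inline, before the block is kept.
            self.blocks[fingerprint] = zlib.compress(data)
        return fingerprint

    def read(self, fingerprint: str) -> bytes:
        """Return the original, uncompressed block."""
        return zlib.decompress(self.blocks[fingerprint])

    def physical_bytes(self) -> int:
        """Bytes actually held after dedup and compression."""
        return sum(len(block) for block in self.blocks.values())


store = InlineDedupStore()
first = store.write(b"A" * 4096)
second = store.write(b"A" * 4096)  # duplicate block, not stored again
```

Writing the same 4KB block twice leaves a single compressed copy in the store, so the physical footprint ends up well below the logical bytes written.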

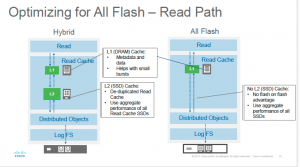

As we have seen from many storage vendors, a log-structured file system coupled with flash gives a write-optimised data path, which helps with compression and SSD wear.
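For anyone unfamiliar with the log-structured idea, a rough Python sketch: every write is appended to the end of a log and an index records where the latest version of each key lives, so overwrites never modify data in place. The names here are hypothetical and this is a generic illustration, not the HyperFlex data path.

```python
class AppendOnlyLog:
    """Toy log-structured store (hypothetical): writes only ever append."""

    def __init__(self):
        self.log = bytearray()  # stands in for the sequential log on flash
        self.index = {}         # key -> (offset, length) of the latest version

    def put(self, key: str, value: bytes) -> int:
        """Append the value to the log and return the offset it landed at."""
        offset = len(self.log)
        self.log += value
        # An overwrite just repoints the index; the old bytes stay in the
        # log until a cleaner (not modelled here) reclaims them.
        self.index[key] = (offset, len(value))
        return offset

    def get(self, key: str) -> bytes:
        """Read the latest version of a key via the index."""
        offset, length = self.index[key]
        return bytes(self.log[offset:offset + length])


log = AppendOnlyLog()
offset_a = log.put("a", b"one")
offset_b = log.put("b", b"two")
offset_a2 = log.put("a", b"three")  # overwrite goes to the end of the log
```

Because updates land sequentially rather than rewriting blocks in place, the write pattern is friendlier to flash, which is the wear benefit mentioned above.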

Dynamic Data Distribution makes it possible to draw on the performance of all the SSDs, which in turn spreads both the load and the wear across them.
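As a rough illustration of why spreading data across every SSD evens out load and wear, the sketch below places blocks on devices by hashing a block identifier. Hash-based placement is my assumption for the example. The announcement does not describe the actual placement algorithm, and the function names and counts are made up.

```python
import hashlib
from collections import Counter


def place_block(block_id: str, num_ssds: int) -> int:
    """Pick an SSD for a block by hashing its id (hypothetical policy)."""
    digest = hashlib.sha256(block_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_ssds


def distribution(num_blocks: int, num_ssds: int) -> Counter:
    """Count how many blocks land on each SSD."""
    return Counter(
        place_block(f"block-{i}", num_ssds) for i in range(num_blocks)
    )


# 10,000 blocks over 8 SSDs: each device should hold a similar share.
counts = distribution(10_000, 8)
for ssd in sorted(counts):
    print(f"ssd{ssd}: {counts[ssd]} blocks")
```

With an even spread no single SSD takes a disproportionate share of the writes, so both throughput and wear are shared by the whole tier.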

You will see from the image above that the pre-All Flash configurations still used some SSD for read performance and deduplication tasks; in the All Flash options this is simply absorbed into the SSD tier that is already present.

Another addition is the ability to use the 40Gb third-generation Fabric Interconnects. More details on those can be found here – http://blogs.cisco.com/datacenter/fast-and-flexible-new-third-generation-fabric-interconnect

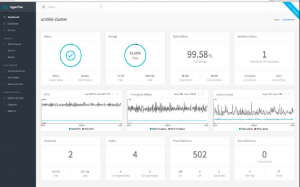

HTML 5

With this new firmware release we also see a nice new shiny HTML 5 web interface for the native management of the HyperFlex systems.

- New Web GUI for direct management

- Simple and Smooth interface using HTML 5

- Manage HX Cluster and Datastores

- View Alarms, Events and Performance Charts

- View VM inventory and resource pools

As I said earlier, the table stakes for hyperconverged offerings should already include these new features, but it’s great to see that so much is being added to the product set at only version 2.0.

The Big Easy button for setup and deployment is a must in this modular approach to deploying infrastructure. Some of the improvements are listed below:

- Improved Day 0 Experience

- Integrated UI Workflow

- Improved Validations

- Single Configuration File

- UCSM

- Deployment

- Cluster Creation & Expansion

On the flip side, the topical trend in our industry at the moment is APIs, and the new HyperFlex firmware boasts a range of new features and functionality in its API approach:

- New RESTful API with HTTP verbs

- Enables configuration, monitoring, queries

- Management via an on-demand stateless protocol

- Allows for external applications to interface directly with the HX management plane

- Basis for enhanced policy driven integrations with products such as UCS Director.
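To show what "RESTful API with HTTP verbs" over a stateless protocol looks like from a client's point of view, here is a small Python sketch that builds (but does not send) a few requests. Everything specific in it is a placeholder of mine: the host name, the `/api/v1/datastores` paths, the payload fields and the bearer token are hypothetical and are not taken from the HyperFlex API documentation.

```python
import json
import urllib.request

# Hypothetical placeholder, NOT the documented HyperFlex endpoint.
BASE_URL = "https://hx-cluster.example.com/api/v1"


def build_request(method, path, payload=None, token="PLACEHOLDER-TOKEN"):
    """Build, without sending, a self-contained HTTP request.

    Stateless means each request carries everything the server needs
    (here: credentials and body), with no session kept between calls.
    """
    body = json.dumps(payload).encode("utf-8") if payload is not None else None
    headers = {
        "Authorization": f"Bearer {token}",  # assumed auth scheme
        "Accept": "application/json",
    }
    if body is not None:
        headers["Content-Type"] = "application/json"
    return urllib.request.Request(
        BASE_URL + path, data=body, method=method, headers=headers
    )


# The HTTP verb carries the intent: query, create, remove.
list_req = build_request("GET", "/datastores")
create_req = build_request("POST", "/datastores", {"name": "ds01", "size_gb": 500})
delete_req = build_request("DELETE", "/datastores/ds01")

for req in (list_req, create_req, delete_req):
    print(req.get_method(), req.full_url)
```

An external tool would pass requests like these to `urllib.request.urlopen` (or any HTTP client) against the real endpoints from the product documentation, which is what lets other applications talk to the management plane directly.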

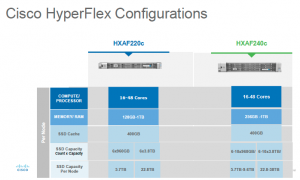

Lastly, I wanted to touch on the options and configurations available. The hybrid approach has not gone away: as you can see below, you can still have a 22TB node, but with All Flash you can now have a node of close to 40TB.